How To Develop Secure Software – Action Plan To Make Secure

The purpose of this article is to help developers build secure software. Easily avoided software defects are a primary cause of commonly exploited software vulnerabilities. By identifying insecure coding practices and developing secure alternatives, software developers can take practical steps to reduce or eliminate vulnerabilities while developing a software product.

According to a study released last year by WhiteHat Security, Cross-Site Scripting regained the number one spot in all but one language after having been overtaken by Information Leakage. .NET has Information Leakage as the number one vulnerability, followed by Cross-Site Scripting. ColdFusion has a rate of 11% SQL Injection vulnerabilities, the highest observed, followed by ASP with 8% and .NET with 6%. Perl has an observed rate of 67% Cross-Site Scripting vulnerabilities, over 17% more than any other language. There was less than a 2% difference among the languages for Cross-Site Request Forgery. Many vulnerability classes were not affected by language choice.

The most effective way to reduce application security risk is to implement a formal development process that includes security best practices to avoid application vulnerabilities. Secure Development process and Security Testing are powerful tools to monitor and search for application flaws and they should be used together to increase the security level of business critical applications/software.

Secure coding is the practice of writing code for applications in such a way as to ensure the confidentiality, integrity and availability of data and information related to those systems. Programmers fluent in secure coding practices can avoid common security flaws in programming languages and follow best practices to reduce the number of targeted attacks that focus on application vulnerabilities.

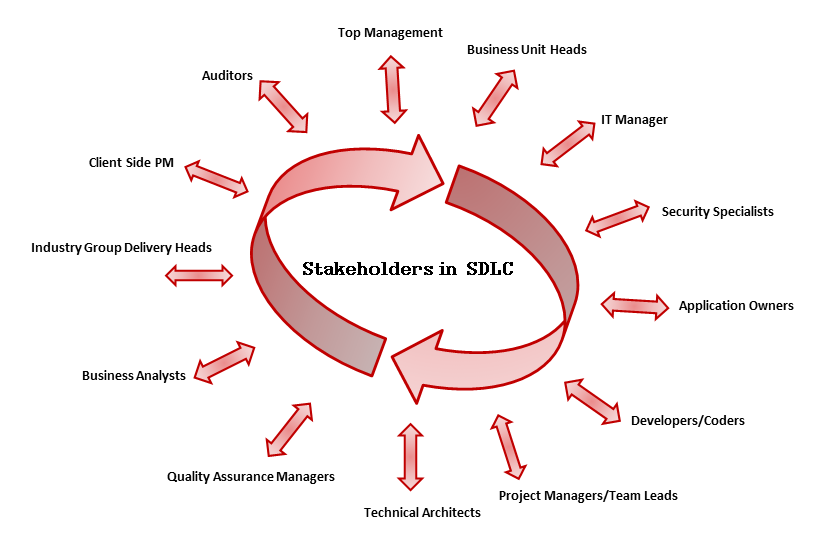

Application security is a process that begins early in the application development lifecycle and aims to secure the development process (coding), the system and hardware the application runs on, and the network it uses to connect, authenticate and authorize users. Building secure software and incorporating security best practices into the development process is the responsibility of all the stakeholders involved in the Software Development Lifecycle (SDLC).

Figure: SDLC Stakeholders

INTRODUCTION

The current trend is to identify issues by performing a security assessment of applications after they are developed and then fix these issues. Patching software in this way can help, but it is a costlier approach to address the issues.

This cycle of Testing – Patching – Re-testing runs into multiple iterations and can be avoided to a great extent by addressing issues earlier in the life cycle. The next section covers a very important aspect: the need for programs like the Secure SDLC (S-SDLC).

As the old saying goes, “Necessity is the mother of invention”, and this applies to secure software development as well. There were days when organizations were interested only in developing an application, selling it to the client and forgetting about the rest of the complexities. Those days are gone.

The simple answer to why such programs are needed is that the threat landscape has changed drastically. There are people out there whose only intention is to break into computer systems and networks to damage them, whether for fun or for profit. These could be novice hackers looking for a shortcut to fame who brag about their exploits on the internet. They could also be groups of organized criminals who work silently on the wire. They don’t make noise, but when their job is done it results in a huge loss for the organization in question, not to mention a huge profit for the criminals.

This is where secure development comes into the picture. Employing a team of ethical hackers helps, but a secure development process lets organizations address the issues discussed above far more cost-efficiently, because identifying security issues earlier in the development life cycle reduces the cost of fixing them.

Want to build a secure application and keep it from getting hacked? Here is a guideline for building secure applications.

Let’s get serious about building secure Web applications.

THINGS YOU NEED TO DEVELOP SECURE SOFTWARE

1. AUTHENTICATION AND AUTHORIZATION:

Don’t hardcode credentials: Never allow credentials to be stored directly within the application code.

Example: Hard coded passwords in networking devices https://www.us-cert.gov/control_systems/pdf/ICSA-12-243-01.pdf
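As a minimal sketch of keeping credentials out of the code, the example below reads them from the process environment at startup. The variable names `APP_DB_USER` and `APP_DB_PASSWORD` are hypothetical; a secrets manager or protected configuration file would serve the same purpose.

```python
import os


def load_db_credentials(env=os.environ):
    """Read database credentials from the environment, not from source code."""
    # APP_DB_USER and APP_DB_PASSWORD are hypothetical names used for illustration.
    user = env.get("APP_DB_USER")
    password = env.get("APP_DB_PASSWORD")
    if not user or not password:
        # Refuse to start rather than fall back to a built-in default password.
        raise RuntimeError("database credentials are not configured")
    return user, password
```

The function fails loudly when the values are missing, so a forgotten configuration step cannot silently turn into a default password.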

Implement a strong password policy: A password policy should be created and implemented so that passwords meet specific strength criteria.

Implement account lockout against brute force attacks: Account lockout needs to be implemented to guard against brute forcing attacks against both the authentication and password reset functionality. After several tries on a specific user account, the account should be locked for a period of time or until manually unlocked.
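The lockout rule can be sketched as a small in-memory counter. The threshold of 5 attempts and the 15 minute lock are assumed values, and the `LoginThrottle` class is a hypothetical illustration; a real application would keep this state in a shared store.

```python
import time

MAX_ATTEMPTS = 5            # assumed threshold, set by your policy
LOCKOUT_SECONDS = 15 * 60   # assumed lock duration


class LoginThrottle:
    """Tracks consecutive failed logins per account and locks after too many."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._failures = {}      # username -> consecutive failure count
        self._locked_until = {}  # username -> time at which the lock expires

    def is_locked(self, username):
        until = self._locked_until.get(username)
        if until is None:
            return False
        if self._clock() >= until:
            # The lock has expired: clear it and start counting again.
            del self._locked_until[username]
            self._failures.pop(username, None)
            return False
        return True

    def record_failure(self, username):
        count = self._failures.get(username, 0) + 1
        self._failures[username] = count
        if count >= MAX_ATTEMPTS:
            self._locked_until[username] = self._clock() + LOCKOUT_SECONDS

    def record_success(self, username):
        # A successful login resets the counter for that account.
        self._failures.pop(username, None)
        self._locked_until.pop(username, None)
```

The same throttle should sit in front of the password reset function, since the text above names both as brute force targets.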

2. SESSION MANAGEMENT:

Invalidate the session after logout: When the user logs out of the application the session and corresponding data on the server must be destroyed. This ensures that the session cannot be accidentally revived.

Implement an idle session timeout: When a user is not active, the application should automatically log the user out. Be aware that Ajax applications may make recurring calls to the application effectively resetting the timeout counter automatically.
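Logout invalidation and the idle timeout can both be enforced on the server. The sketch below assumes an in-memory `SessionStore` (a hypothetical class) and a 15 minute idle limit; frameworks usually provide an equivalent.

```python
import secrets
import time

IDLE_TIMEOUT_SECONDS = 15 * 60  # assumed policy value


class SessionStore:
    """Server-side session table with logout and idle expiry."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._sessions = {}  # session id -> (user, time of last activity)

    def create(self, user):
        sid = secrets.token_urlsafe(32)  # unpredictable session identifier
        self._sessions[sid] = (user, self._clock())
        return sid

    def get_user(self, sid):
        entry = self._sessions.get(sid)
        if entry is None:
            return None
        user, last_seen = entry
        if self._clock() - last_seen > IDLE_TIMEOUT_SECONDS:
            # Idle for too long: destroy the session on the server.
            del self._sessions[sid]
            return None
        self._sessions[sid] = (user, self._clock())  # refresh activity time
        return user

    def logout(self, sid):
        # Remove the server-side record so the id cannot be revived.
        self._sessions.pop(sid, None)
```

Background Ajax polling would call `get_user` and keep refreshing the timer, so such requests may need a separate path that does not count as user activity.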

Use secure cookie attributes (i.e. httponly and secure flags): The session cookie should be set with both the HttpOnly and the secure flags. This ensures that the session id will not be accessible to client-side scripts and it will only be transmitted over SSL, respectively.
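With Python's standard `http.cookies` module, the two flags can be set as in the sketch below. The cookie name `sessionid` is an assumption for illustration.

```python
from http.cookies import SimpleCookie


def build_session_cookie(session_id):
    """Return a Set-Cookie header value with the HttpOnly and Secure flags."""
    cookie = SimpleCookie()
    cookie["sessionid"] = session_id         # hypothetical cookie name
    cookie["sessionid"]["httponly"] = True   # hidden from client-side scripts
    cookie["sessionid"]["secure"] = True     # only sent over encrypted connections
    cookie["sessionid"]["path"] = "/"
    return cookie["sessionid"].OutputString()
```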

3. INPUT AND OUTPUT HANDLING/VALIDATION:

Prefer whitelists over blacklists: For each user input field, there should be validation on the input content. Whitelisting input is the preferred approach: only accept data that meets specific criteria. For input that needs more flexibility, blacklisting can also be applied, where known bad input patterns or characters are blocked.
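A whitelist check can be as small as one anchored regular expression. The rule below (3 to 32 letters, digits or underscores for a username) is an assumed example, not a recommendation for every field.

```python
import re

# Assumed rule for illustration: 3 to 32 letters, digits or underscores.
USERNAME_PATTERN = re.compile(r"[A-Za-z0-9_]{3,32}")


def is_valid_username(value):
    """Accept only input that matches the whitelist pattern in full."""
    return isinstance(value, str) and USERNAME_PATTERN.fullmatch(value) is not None
```

Using `fullmatch` matters here: a partial match would let a valid prefix carry arbitrary trailing content.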

Use parameterized SQL queries: SQL queries should be crafted with user content passed into a bind variable. Queries written this way are safe against SQL injection attacks. SQL queries should not be created dynamically using string concatenation. Similarly, the SQL query string used in a bound or parameterized query should never be dynamically built from user input.
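The sketch below shows a bound parameter using Python's built-in `sqlite3` module. The `users` table and its contents are made up for the example.

```python
import sqlite3


def find_user(conn, username):
    """Look up a user with a bound parameter instead of string concatenation."""
    # The query text is a fixed string; user input travels only as a parameter.
    cur = conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    )
    return cur.fetchall()


# Hypothetical in-memory table used only to exercise the function.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])
```

A classic injection string such as `' OR '1'='1` is treated as a literal username here and matches no rows.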

Use CSRF tokens to prevent forged requests: In order to prevent Cross-Site Request Forgery attacks, you must embed a random value that is not known to third parties into the HTML form. This CSRF protection token must be unique to each request. This prevents a forged CSRF request from being submitted because the attacker does not know the value of the token.
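One way to sketch the token scheme, assuming the server-side session behaves like a dictionary and using the hypothetical key `csrf_token`:

```python
import hmac
import secrets


def issue_csrf_token(session):
    """Create a random token, remember it server-side, and return it for the form."""
    token = secrets.token_urlsafe(32)
    session["csrf_token"] = token  # hypothetical session key
    return token


def verify_csrf_token(session, submitted):
    """Check the submitted token once; it is discarded after the first use."""
    expected = session.pop("csrf_token", None)
    if expected is None or not isinstance(submitted, str):
        return False
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected.encode("utf-8"), submitted.encode("utf-8"))
```

Popping the stored value makes each token valid for a single request, which matches the per-request uniqueness described above.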

Validate uploaded files: When accepting file uploads from the user make sure to validate the size of the file, the file type, and the file contents as well as ensuring that it is not possible to override the destination path for the file.
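The upload checks can be sketched for a service that accepts only PNG images. The size limit, the upload directory and the function name are assumptions for illustration.

```python
import os

MAX_UPLOAD_BYTES = 2 * 1024 * 1024   # assumed size limit
PNG_MAGIC = b"\x89PNG\r\n\x1a\n"     # the 8-byte PNG file signature
UPLOAD_DIR = "/srv/app/uploads"      # hypothetical destination directory


def safe_upload_path(filename, data):
    """Validate size, type and content, then build a path inside UPLOAD_DIR."""
    if len(data) > MAX_UPLOAD_BYTES:
        raise ValueError("file too large")
    if not data.startswith(PNG_MAGIC):
        raise ValueError("content is not a PNG image")
    # Keep only the final path component so "../" cannot change the destination.
    name = os.path.basename(filename.replace("\\", "/"))
    if name.startswith(".") or not name.lower().endswith(".png"):
        raise ValueError("unexpected file name")
    return os.path.join(UPLOAD_DIR, name)
```

Checking the signature covers only the first bytes of the file; stricter validation would parse the image fully or re-encode it.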

Validate the source of input: The source of the input must be validated. For example, if input is expected from a POST request, do not accept the input variable from a GET request.

Use CSP and X-XSS-Protection headers: Content Security Policy (CSP) and X-XSS-Protection headers help defend against many common reflected Cross-Site Scripting (XSS) attacks.
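A small sketch of adding both headers to every response follows. The header values are assumed starting points, and the policy string in particular has to be tuned to what the application actually loads.

```python
# Assumed starting values; tune the policy to the resources your pages load.
SECURITY_HEADERS = {
    "Content-Security-Policy": "default-src 'self'",
    "X-XSS-Protection": "1; mode=block",
}


def add_security_headers(headers):
    """Return a copy of the response headers with the security headers applied."""
    merged = dict(headers)
    merged.update(SECURITY_HEADERS)
    return merged
```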

4. ACCESS CONTROL:

Apply the principle of least privilege: Make use of a Mandatory Access Control system. All access decisions will be based on the principle of least privilege.

Don’t use direct object references for access control checks: Do not allow direct references to files or parameters that can be manipulated to grant excessive access. Access control decisions must be based on the authenticated user identity and trusted server side information.

Use only trusted system objects, e.g. server side session objects, for making access authorization decisions. Use a single site-wide component to check access authorization. This includes libraries that call external authorization services.
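The points in this section can be sketched as a single check that uses the identity in the server-side session rather than anything supplied in the request. The `DOCUMENT_OWNERS` table and the function names are hypothetical stand-ins for a real data store.

```python
class AccessDenied(Exception):
    """Raised when the current user may not access the requested object."""


# Hypothetical ownership table standing in for a database lookup.
DOCUMENT_OWNERS = {101: "alice", 102: "bob"}


def load_document(session, document_id):
    """Return a document only if the authenticated user owns it."""
    # The identity comes from the server-side session, never from a request field.
    user = session.get("user")
    if user is None or DOCUMENT_OWNERS.get(document_id) != user:
        raise AccessDenied("not authorized for this document")
    return {"id": document_id, "owner": user}
```

Changing the document id in a URL from 101 to 102 therefore fails unless the session really belongs to the owner.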

5. PROPER ERROR HANDLING AND LOGGING:

Error messages should not reveal details about the internal state of the application. For example, file system path and stack information should not be exposed to the user through error messages.

Logs should be stored and maintained appropriately to avoid information loss or tampering by an intruder. Log retention should also follow the retention policy set forth by the organization to meet regulatory requirements and provide enough information for forensic and incident response activities.

Do not disclose sensitive information in error responses, including system details, session identifiers or account information.

Logging controls should support both success and failure of specified security events.
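A minimal sketch of these points: the user sees a generic message with a reference number, the details go to the server log, and both successful and failed logins are recorded. The logger name and function names are assumptions.

```python
import logging
import uuid

logger = logging.getLogger("app.security")  # hypothetical logger name


def handle_request(handler):
    """Run a request handler and hide internal details from the user on failure."""
    try:
        return 200, handler()
    except Exception:
        incident_id = uuid.uuid4().hex
        # The stack trace and message go to the server log only.
        logger.exception("unhandled error, incident %s", incident_id)
        return 500, "An internal error occurred. Reference: " + incident_id


def log_login(username, success):
    """Record both outcomes of a security event."""
    if success:
        logger.info("login succeeded for user %s", username)
    else:
        logger.warning("login failed for user %s", username)
```

The reference number lets support staff find the full log entry without exposing paths or stack information in the response.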

6. DATA PROTECTION:

Ideally, SSL should be used for your entire application. If you have to limit where it’s used, then SSL must be applied to any authentication pages as well as all pages after the user is authenticated. If sensitive information (e.g. personal information) can be submitted before authentication, those features must also be sent over SSL.

Implement least privilege; restrict users to only the functionality, data and system information that are required to perform their tasks.

Encrypt highly sensitive stored information, like authentication verification data, even on the server side. Always use well-vetted algorithms; see “Cryptographic Practices” for additional guidance.
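For password verification data in particular, the common approach is a salted, deliberately slow one-way hash rather than reversible encryption. The sketch below uses PBKDF2 from Python's standard library; the iteration count is an assumed value and should follow current guidance.

```python
import hashlib
import hmac
import os

ITERATIONS = 600_000  # assumed work factor; follow current guidance


def hash_password(password, salt=None):
    """Derive a salted PBKDF2-HMAC-SHA256 digest for storage."""
    if salt is None:
        salt = os.urandom(16)  # a fresh random salt for each password
    digest = hashlib.pbkdf2_hmac("sha256", password.encode("utf-8"), salt, ITERATIONS)
    return salt, digest


def verify_password(password, salt, expected_digest):
    """Recompute the digest and compare it in constant time."""
    _, digest = hash_password(password, salt)
    return hmac.compare_digest(digest, expected_digest)
```

Only the salt and digest are stored, so a leaked database does not directly reveal the passwords.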

7. BUSINESS LOGIC:

A business logic vulnerability is one that allows the attacker to misuse an application by circumventing the business rules. Most security problems are weaknesses in an application that result from a broken or missing security control.

The checklist above helps to identify the minimum standard that is required to neutralize vulnerabilities in your critical applications.
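As an illustration of a business rule enforced on the server, the sketch below assumes a hypothetical shop where the price comes from a server-side catalogue and the quantity must stay within a sensible range. Without such checks, a negative quantity or a client-supplied price could be used to circumvent the rules.

```python
# Hypothetical catalogue with prices in cents; the client never supplies a price.
PRICES = {"widget": 500}
MAX_QUANTITY = 100  # assumed business limit


def order_total(item, quantity):
    """Compute an order total while enforcing the business rules server-side."""
    if item not in PRICES:
        raise ValueError("unknown item")
    if isinstance(quantity, bool) or not isinstance(quantity, int):
        raise ValueError("quantity must be a whole number")
    if not 1 <= quantity <= MAX_QUANTITY:
        raise ValueError("quantity out of range")
    return PRICES[item] * quantity
```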

ACTION PLAN TO DEVELOP SECURE SOFTWARE

Security requirements help organizations to think about security early on in the project. They represent specific security goals and constraints that affect the confidentiality, integrity and availability of important application data and the means by which that data is accessed. If these requirements aren’t specified, they won’t be built or tested.

“The key problem is that, at the network level, data used to exploit security flaws is often indistinguishable from legitimate application data. Our only hope is to tackle vulnerabilities at their root – in the applications themselves.”

STEP 1. THREAT MODELLING:

Threat modelling is an approach for analysing the security of an application. It is a structured approach that enables you to identify, quantify, and address the security risks associated with an application. Threat modelling is not an approach to reviewing code, but it does complement the security code review process. The inclusion of threat modelling in the SDLC can help to ensure that applications are being developed with security built-in from the very beginning.

There are several good reference guides to help with threat modelling, including a free threat modelling reference from the National Institute of Standards and Technology (NIST).

STEP 2. ARCHITECTURE AND DESIGN REVIEW PROCESS:

The architecture and design review process analyses the architecture and design from a security perspective. If you have just completed the design, the design documentation can help you with this process. Regardless of how comprehensive your design documentation is, you must be able to decompose your application and be able to identify key items, including trust boundaries, data flow, entry points, and privileged code. You must also know the physical deployment configuration of your application. Pay attention to the design approaches you have adopted for those areas that most commonly exhibit vulnerabilities. This guide refers to these as application vulnerability categories. There are many excellent resources available for code reviews including those from Microsoft.

STEP 3. GET DEVELOPERS SECURITY-SAVVY:

The most effective solution is to train developers from the beginning on secure coding techniques. The security-savvy software developer leads all developers in the creation of secure software, implementing secure programming techniques that are free from logical design and technical implementation flaws. This expert is ultimately responsible for ensuring customer software is free from vulnerabilities that can be exploited by an attacker. At the implementation stage, security is largely a developer awareness problem. Given a specific requirement or design, code can be written in a vast number of ways to meet that objective. Each of these can lead to an application that meets its functional requirements.

By implementing these secure coding standards in your organisation and enforcing them through secure code reviews and static analysis tools, you can suppress one of the most common causes of released vulnerabilities in software.

“Security design reviews give an early insight into potential problems, as does threat modelling, and both can be performed early where security defects are easier and less costly to fix.”

STEP 4. PENETRATION TESTING:

Penetration testing (also called pen testing) is the practice of testing a computer system, network or Web application to find vulnerabilities that an attacker could exploit. Pen tests can be automated with software applications or they can be performed manually. Either way, the process includes gathering information about the target before the test (reconnaissance), identifying possible entry points, attempting to break in (either virtually or for real) and reporting back the findings.

This puts the application through the paces of a series of attacks. Output from the threat models can be reused here to establish the focus and scope of the testing. For example, did the tester try a series of SQL injection attacks vs. Cross Site Scripting? Did the tester attack the user interface vs. the database server?

Figure: Penetration Testing

STEP 5. FINAL SECURITY REVIEW:

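This step is conducted prior to deployment and is the last look in the mirror before walking out the door. Have all the weak spots found in the threat modeling and penetration testing phases been addressed? Have you made all the proper configuration settings in the database, web server, etc.?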

SECURITY PRINCIPLES FOR SECURE CODE DEVELOPMENT

1. MINIMIZE ATTACK SURFACE AREA:

Every feature that is added to an application adds a certain amount of risk to the overall application. The aim for secure development is to reduce the overall risk by reducing the attack space.

2. PRINCIPLE OF LEAST PRIVILEGE:

The principle of least privilege recommends that accounts have the least amount of privilege required to perform their business processes. This encompasses user rights, resource permissions, such as CPU limits, memory, network, and file system permissions.

3. PRINCIPLE OF DEFENSE IN DEPTH:

The principle of Defense in Depth suggests that where one control would be reasonable, more controls that approach risks in different fashions are better.

Controls, when used in depth, can make severe vulnerabilities extraordinarily difficult to exploit and thus unlikely to occur. With secure coding, this may take the form of tier-based validation, centralized auditing controls, and requiring users to be logged in on all pages.

4. FAIL SECURELY

Applications regularly fail to process transactions for many reasons. How they fail can determine whether an application is secure or not.

Do not assume that external systems are secure. Many organizations use the processing capabilities of third-party partners, who more than likely have differing security policies and postures. It is unlikely that an external third party can be influenced or controlled, so implicit trust of externally run systems is not warranted. All external systems should be treated in a similar fashion.
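The fail-secure idea at the start of this section can be sketched as a check that starts from "deny" and only grants access when the lookup completes successfully. The `lookup_role` callable is a hypothetical stand-in for a call to a database or an external service.

```python
def is_admin(user_id, lookup_role):
    """Return True only when the role lookup succeeds and reports 'admin'."""
    allowed = False  # default deny
    try:
        # lookup_role is a hypothetical callable; it may raise on any failure.
        allowed = lookup_role(user_id) == "admin"
    except Exception:
        # Any failure leaves the user without the privilege.
        allowed = False
    return allowed
```

Starting from `True` and switching to `False` only on a confirmed mismatch would grant admin rights whenever the lookup breaks, which is the failure mode this principle warns about.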

5. SEPARATION OF DUTIES

A key fraud control is separation of duties. For example, someone who requests a computer cannot also sign for it, nor should they directly receive the computer. This prevents the user from requesting many computers, and claiming they never arrived. Certain roles have different levels of trust than normal users. In particular, Administrators are different to normal users. In general, administrators should not be users of the application.

6. DO NOT TRUST SECURITY THROUGH OBSCURITY

Security through obscurity is a weak security control, and nearly always fails when it is the only control. This is not to say that keeping secrets is a bad idea, it simply means that the security of key systems should not be reliant upon keeping details hidden.

7. SIMPLICITY

Attack surface area and simplicity go hand-in-hand. Certain software engineering fads prefer overly complex approaches to what would otherwise be relatively straightforward and simple code. Developers should avoid the use of double negatives and complex architectures when a more straightforward approach would be faster and simpler.

8. FIX SECURITY ISSUES CORRECTLY

Once a security issue has been identified, it is important to develop a test for it, and to understand the root cause of the issue. When design patterns are used, it is likely that the security issue is widespread amongst all code bases, so developing the right fix without introducing regressions is essential.

THE TEN BEST PRACTICES

1. Protect the Brand Your Customers Trust

2. Know Your Business and Support it with Secure Solutions

3. Understand the Technology of the Software

4. Ensure Compliance to Governance, Regulations, and Privacy

5. Know the Basic Tenets of Software Security

6. Ensure the Protection of Sensitive Information

7. Design Software with Secure Features

8. Develop Software with Secure Features

9. Deploy Software with Secure Features

10. Educate Yourself and Others on How to Build Secure Software

BENEFITS OF SECURE SDLC

1. Build more secure software

2. Help address security compliance requirements

3. Reduce costs of maintenance

4. Awareness of potential engineering challenges caused by mandatory security controls

5. Identification of shared security services and reuse of security strategies and tools

6. Early identification and mitigation of security vulnerabilities and problems

7. Documentation of important security decisions made during the development

CONCLUSION:

Security and development teams can work together—they just need to look for common areas in which they can make improvements. Security teams focus on confidentiality and integrity of data, which can sometimes require development teams to slow down and assess code differently. At the same time, business units require developers to produce and revise code more quickly than ever, resulting in developers focusing on what works best instead of what is most secure.

This difference in focus does not mean that either side is wrong. In fact, both teams are doing exactly what they’re supposed to do. However, in order to facilitate teams accomplishing both sets of goals and working together more fluidly, changes to tools and processes are necessary.

REFERENCES:

1. http://info.whitehatsec.com/rs/whitehatsecurity/images/statsreport2014-20140410.pdf

2. https://www.owasp.org/images/7/7c/OWASP-Building-Secure-Web-Apps-070110.pdf

3. https://www.us-cert.gov/control_systems/pdf/ICSA-12-243-01.pdf

4. https://www.owasp.org/index.php/The_Owasp_Code_Review_Top_9

5. https://www.owasp.org/index.php/Testing_for_authentication

6. https://www.owasp.org/index.php/Web_Application_Security_Testing_Cheat_Sheet

7. https://www.owasp.org/index.php/Application_Threat_Modeling

AUTHOR:

Security Consultant,

Varutra Consulting, Pvt. Ltd.